DEEPSEEK OPEN MODELS: WORTH A BACKEND/RAG BENCHMARK

A community post claims a free "DeepSeek V3.2" outperforms top closed models, but the source provides no verifiable details. Regardless, DeepSeek’s open models are mature enough to justify a brief, task-focused benchmark on code generation, test scaffolding, and RAG to gauge quality, latency, and cost. Treat the specific claim as unverified until confirmed by official docs.

Open models can cut inference cost and reduce vendor lock-in for backend workflows.

On-prem or VPC hosting improves data control and compliance for code and pipeline artifacts.

- Compare code-gen quality, JSON adherence, and function/tool-calling on your top repo tasks; track pass rate and token cost.

-

terminal

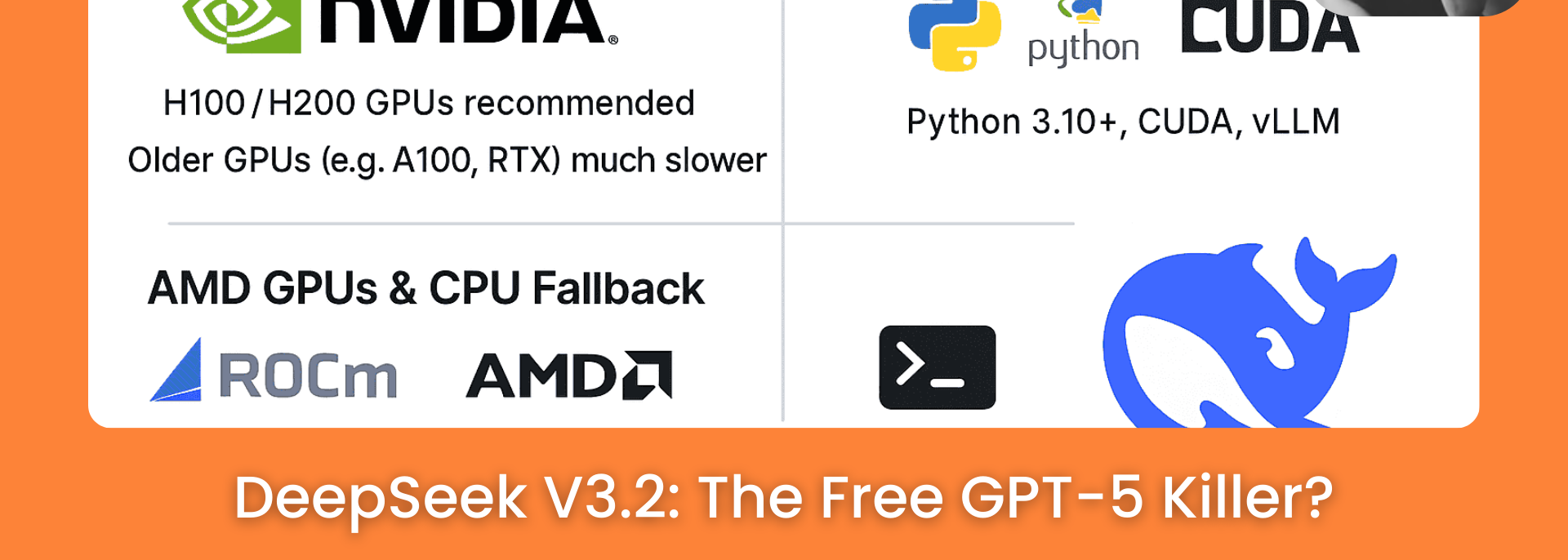

Load-test latency/throughput via vLLM/Ollama and verify context window, truncation behavior, and streaming stability.
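A minimal harness for the checks above might look like the Python sketch below. It assumes you wrap each candidate model (DeepSeek via vLLM/Ollama, or the incumbent) as a hypothetical `complete(prompt)` function that returns the reply text plus prompt and completion token counts, and that you supply your own per-1k-token prices. The `Task`, `Report`, and `run_benchmark` names are illustrative, not from any library. It scores JSON adherence, task pass rate, token cost, and sequential per-call latency; it is not a concurrent load test, so throughput under load still needs a separate tool.

```python
import json
import statistics
import time
from dataclasses import dataclass
from typing import Callable, List, Tuple

# Hypothetical model wrapper: prompt -> (reply text, prompt tokens, completion tokens).
CompleteFn = Callable[[str], Tuple[str, int, int]]


@dataclass
class Task:
    prompt: str
    check: Callable[[dict], bool]  # task-specific pass/fail test on the parsed JSON


@dataclass
class Report:
    pass_rate: float        # share of tasks whose JSON passed `check`
    json_rate: float        # share of replies that parsed as a JSON object
    total_cost_usd: float
    p50_latency_s: float
    max_latency_s: float


def run_benchmark(complete: CompleteFn, tasks: List[Task],
                  usd_per_1k_in: float, usd_per_1k_out: float) -> Report:
    if not tasks:
        raise ValueError("need at least one task")
    passed = valid_json = 0
    cost = 0.0
    latencies = []
    for task in tasks:
        start = time.perf_counter()
        text, tok_in, tok_out = complete(task.prompt)
        latencies.append(time.perf_counter() - start)  # sequential, single-request latency
        cost += tok_in / 1000 * usd_per_1k_in + tok_out / 1000 * usd_per_1k_out
        try:
            parsed = json.loads(text)
        except json.JSONDecodeError:
            continue  # unparseable reply fails both JSON adherence and the task
        if not isinstance(parsed, dict):
            continue  # valid JSON but not an object: treated as non-adherent
        valid_json += 1
        passed += bool(task.check(parsed))
    n = len(tasks)
    return Report(passed / n, valid_json / n, cost,
                  statistics.median(latencies), max(latencies))


if __name__ == "__main__":
    # Stand-in model so the harness runs offline; swap in a real client call.
    def fake_model(prompt: str) -> Tuple[str, int, int]:
        if "add" in prompt:
            return '{"result": 3}', 10, 5
        return "not json", 8, 4

    tasks = [
        Task("add 1 and 2; reply as JSON", lambda d: d.get("result") == 3),
        Task("summarize the repo", lambda d: "summary" in d),
    ]
    print(run_benchmark(fake_model, tasks, usd_per_1k_in=0.5, usd_per_1k_out=1.0))
```

Running the same task list once per candidate model gives directly comparable numbers; the `check` functions are where repo-specific pass criteria (for example, whether generated tests compile) would go.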

Legacy codebase integration strategies

01. Pilot an OpenAI-compatible swap (DeepSeek via vLLM/Ollama) behind a feature flag in staging and run regression suites on codegen/tests/RAG.

02. Validate tokenization and context-length differences, and adjust guardrails and retries to enforce strict JSON and schema conformance.
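The flagged swap and the stricter JSON handling can be sketched together in Python. Everything here is an assumption for illustration: the `USE_DEEPSEEK` flag, the placeholder model names, the `Endpoint` type, and the `complete` callable. The base URLs are the usual local defaults for vLLM (port 8000) and Ollama (port 11434), both of which serve an OpenAI-compatible API under `/v1`; adjust them to your deployment.

```python
import json
import os
from dataclasses import dataclass
from typing import Callable, Iterable, Mapping, Optional


@dataclass(frozen=True)
class Endpoint:
    base_url: str
    model: str


# Hypothetical endpoints: the incumbent is assumed to be OpenAI for illustration,
# and the model names are placeholders for whatever you actually serve.
INCUMBENT = Endpoint("https://api.openai.com/v1", "incumbent-model")
DEEPSEEK_VLLM = Endpoint("http://localhost:8000/v1", "deepseek-model")
DEEPSEEK_OLLAMA = Endpoint("http://localhost:11434/v1", "deepseek-model")


def select_endpoint(env: Mapping[str, str] = os.environ) -> Endpoint:
    """Pick a backend from a hypothetical feature flag; default to the incumbent."""
    flag = env.get("USE_DEEPSEEK", "off").lower()
    if flag == "vllm":
        return DEEPSEEK_VLLM
    if flag == "ollama":
        return DEEPSEEK_OLLAMA
    return INCUMBENT


def complete_json(complete: Callable[[str], str], prompt: str,
                  required_keys: Iterable[str], max_attempts: int = 3) -> dict:
    """Call the model until it returns a JSON object containing all required keys."""
    keys = set(required_keys)
    last_error: Optional[str] = None
    for _ in range(max_attempts):
        # On retries, tell the model why its previous reply was rejected.
        full_prompt = prompt if last_error is None else (
            f"{prompt}\n\nYour previous reply was rejected: {last_error}. "
            "Reply with a single JSON object only.")
        text = complete(full_prompt)
        try:
            data = json.loads(text)
        except json.JSONDecodeError as exc:
            last_error = f"invalid JSON ({exc.msg})"
            continue
        if not isinstance(data, dict):
            last_error = "top-level value is not an object"
            continue
        missing = keys - data.keys()
        if missing:
            last_error = f"missing keys {sorted(missing)}"
            continue
        return data
    raise ValueError(f"no valid JSON after {max_attempts} attempts: {last_error}")
```

In staging, `complete` could wrap the official `openai` Python client created with `OpenAI(base_url=endpoint.base_url, api_key=...)` and return `client.chat.completions.create(model=endpoint.model, messages=[...]).choices[0].message.content`. Keeping it a plain callable lets the retry logic be unit-tested without a live server.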

Fresh architecture paradigms

01. Abstract model calls behind a provider interface with schema-enforced outputs (e.g., Pydantic/JSON Schema) and deterministic prompts.

02. Ship an evaluation harness in CI from day one with golden prompts and dashboards tracking quality, cost, and latency.