ON-DEVICE LLMS: RUNNING MODELS ON YOUR PHONE

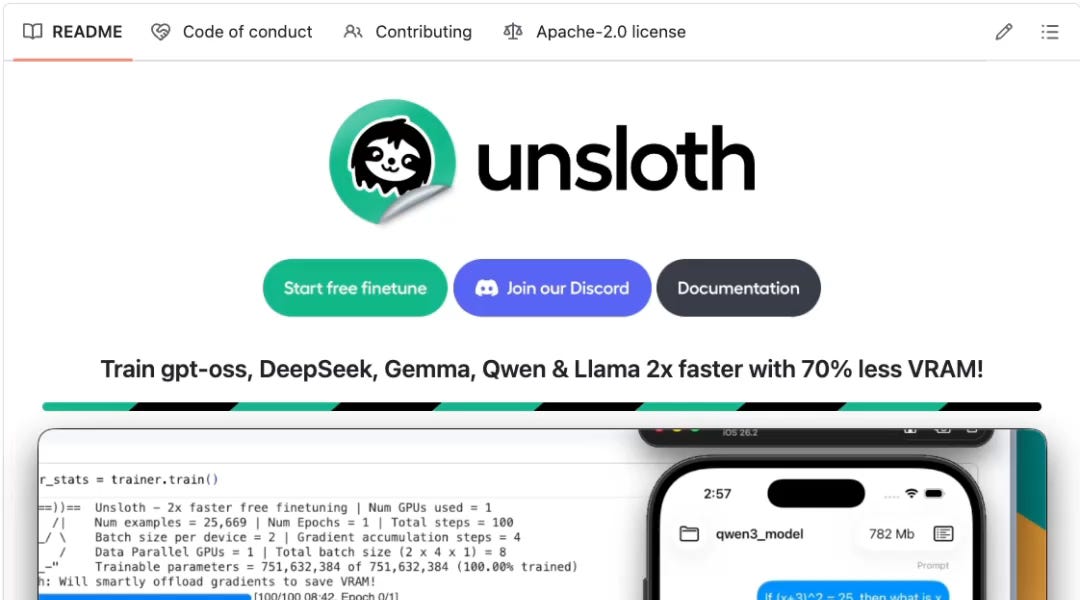

A hands-on guide shows how to deploy and run a compact LLM directly on a smartphone, outlining preparation of a small model, on-device runtime setup, and practical limits around memory, thermals, and latency. For backend/data teams, this validates edge inference for select tasks where low latency, privacy, or offline capability outweighs the accuracy gap of smaller models.

On-device inference can cut tail latency and cloud costs while improving privacy for sensitive prompts.

An edge+cloud split becomes a viable architecture: small local models handle the fast paths, and server models act as fallbacks for complex requests.
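
One way to picture the edge+cloud split is a small router that tries the local model first and falls back to the server. The sketch below is illustrative only: `LocalResult`, its `confidence` field, the `route` function, and the thresholds are all hypothetical names and values, not part of the guide or of any particular runtime.

```python
from dataclasses import dataclass
from typing import Callable, Tuple


@dataclass
class LocalResult:
    text: str
    confidence: float  # hypothetical score in [0, 1] from the local model wrapper


def route(
    prompt: str,
    local_generate: Callable[[str], LocalResult],
    server_generate: Callable[[str], str],
    *,
    online: bool,
    max_local_chars: int = 500,   # assumed cutoff for the "fast path"
    min_confidence: float = 0.6,  # assumed quality threshold
) -> Tuple[str, str]:
    """Return (source, text), where source is "local" or "server"."""
    # Short prompts go to the local model; when offline, everything does.
    if len(prompt) <= max_local_chars or not online:
        result = local_generate(prompt)
        # Accept the local answer if it looks good enough, or if there is
        # no server to fall back to.
        if result.confidence >= min_confidence or not online:
            return "local", result.text
    # Long prompt or low-confidence local answer: use the server model.
    return "server", server_generate(prompt)
```

In this sketch the server is only ever called when `online` is true, so offline mode degrades to the local model rather than failing.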

- Benchmark token throughput, latency, and battery/thermal behavior across 4-bit vs 8-bit quantization on target devices.

- Validate functional parity and fallback logic between on-device and server models, including prompt compatibility and safety filters.
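
The throughput and latency part of such a benchmark could be sketched as a small harness like the one below. It assumes a hypothetical `generate` callable that wraps whichever runtime and quantized model is under test and returns the generated tokens as a list. Battery and thermal behavior are not covered here, since they require platform-specific tooling on the device.

```python
import math
import statistics
import time
from typing import Callable, Dict, List


def benchmark(
    generate: Callable[[str], List[str]],  # hypothetical wrapper around the model under test
    prompts: List[str],
    runs: int = 3,
) -> Dict[str, float]:
    """Measure per-request latency and overall token throughput."""
    if not prompts or runs < 1:
        raise ValueError("need at least one prompt and one run")
    latencies: List[float] = []
    total_tokens = 0
    for _ in range(runs):
        for prompt in prompts:
            start = time.perf_counter()
            tokens = generate(prompt)
            latencies.append(time.perf_counter() - start)
            total_tokens += len(tokens)
    total_time = sum(latencies)
    latencies.sort()
    # Nearest-rank 95th percentile over the sorted latencies.
    p95_index = max(0, math.ceil(0.95 * len(latencies)) - 1)
    return {
        "p50_s": statistics.median(latencies),
        "p95_s": latencies[p95_index],
        "tokens_per_s": total_tokens / total_time if total_time > 0 else 0.0,
    }
```

Running the same prompt set through a 4-bit and an 8-bit wrapper on each target device gives directly comparable numbers.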

Legacy codebase integration strategies...

- 01. Introduce an edge-inference feature flag and A/B test routing some requests to on-device models, with telemetry for quality and SLA impact.

- 02. Plan model distribution, versioning, and license compliance in your mobile release pipeline, along with cache/purge strategies for weights.
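
For the feature-flag rollout, one common approach is deterministic bucketing, so a given user stays in the same cohort across sessions. The following is a minimal sketch under that assumption. The function name `in_edge_cohort`, the flag name, and the percentage scheme are hypothetical and not tied to any specific feature-flag service.

```python
import hashlib


def in_edge_cohort(user_id: str, flag_name: str, rollout_percent: float) -> bool:
    """Return True if this user should be routed to the on-device model.

    rollout_percent is in [0, 100]. The same (flag, user) pair always
    lands in the same bucket, which keeps A/B cohorts stable.
    """
    digest = hashlib.sha256(f"{flag_name}:{user_id}".encode("utf-8")).digest()
    # Map the first 8 bytes of the hash to a bucket in 0..9999.
    bucket = int.from_bytes(digest[:8], "big") % 10_000
    return bucket < rollout_percent * 100
```

Including the flag name in the hash keeps cohorts for different experiments independent of each other.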

Fresh architecture paradigms...

- 01. Design a mixed edge/cloud architecture from day one with clear model selection rules, offline modes, and privacy-by-default data handling.

- 02. Choose a mobile-friendly runtime and quantized model format early, and standardize benchmarks for device classes you support.
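
A simple model selection rule can start from weight memory alone: roughly parameters × bits per weight ÷ 8 bytes. The sketch below applies that arithmetic to pick a quantization level per device class. The 50% RAM budget and the function names are assumptions for illustration, and the estimate ignores the KV cache, activations, and per-format quantization overhead, so real footprints will be larger.

```python
from typing import Optional, Sequence


def weight_footprint_gib(n_params: float, bits_per_weight: float) -> float:
    """Approximate size of the weights alone, in GiB."""
    return n_params * bits_per_weight / 8 / 2**30


def pick_quantization(
    n_params: float,
    device_ram_gib: float,
    ram_budget_fraction: float = 0.5,  # assumed share of RAM the app may use
    candidates: Sequence[int] = (8, 4),
) -> Optional[int]:
    """Return the highest candidate bit width whose weights fit the budget."""
    budget = device_ram_gib * ram_budget_fraction
    for bits in sorted(candidates, reverse=True):
        if weight_footprint_gib(n_params, bits) <= budget:
            return bits
    return None  # nothing fits: route this device class to the server model
```

For example, under these assumptions a 3-billion-parameter model needs about 2.8 GiB of weights at 8-bit and about 1.4 GiB at 4-bit, so a device with 4 GiB of RAM would be assigned the 4-bit variant.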