ON-DEVICE LLMS: RUNNING MODELS ON YOUR PHONE

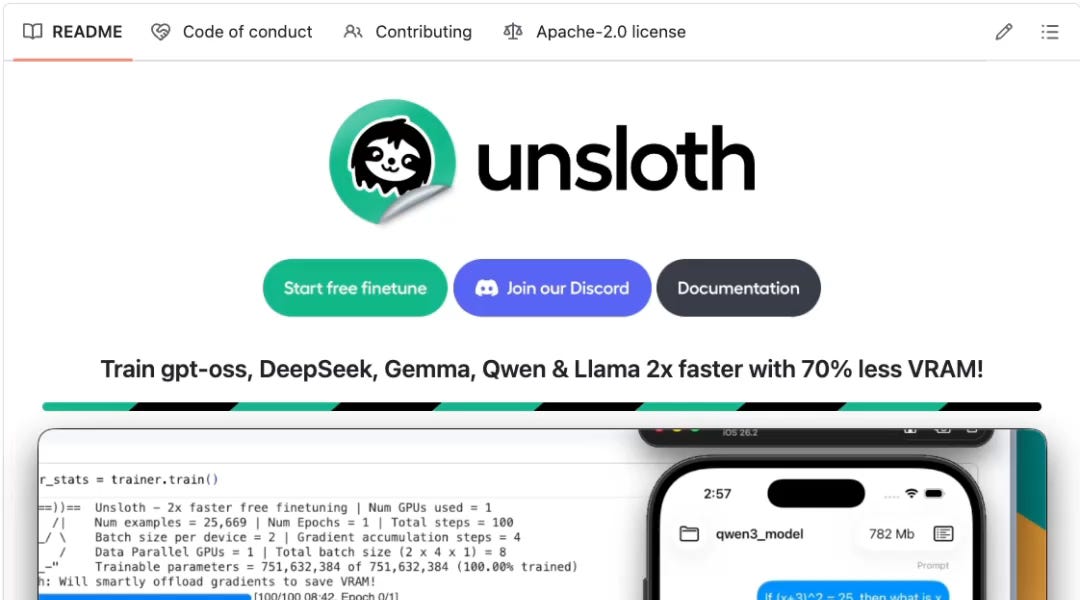

A hands-on guide shows how to deploy and run a compact LLM directly on a smartphone, outlining preparation of a small model, on-device runtime setup, and practical limits around memory, thermals, and latency. For backend/data teams, this validates edge inference for select tasks where low latency, privacy, or offline capability outweighs the accuracy gap of smaller models.
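To make the memory limit concrete, here is a rough back-of-the-envelope sketch of how much space model weights alone take at different precisions. The 3-billion-parameter size is a hypothetical example, not a figure from the guide, and the estimate ignores the KV cache, activations, and per-block quantization metadata, all of which add to the real footprint.

```python
def weight_memory_gib(num_params: float, bits_per_weight: float) -> float:
    """Approximate memory for model weights alone, in GiB."""
    bytes_total = num_params * bits_per_weight / 8
    return bytes_total / 2**30


# Hypothetical model size for illustration only (assumption, not from the guide).
PARAMS = 3e9

for bits in (16, 8, 4):
    print(f"{bits:>2}-bit weights: ~{weight_memory_gib(PARAMS, bits):.2f} GiB")
```

Halving the bits per weight halves the weight footprint, which is often what decides whether a model fits alongside the OS and other apps on a given phone.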

On-device inference can cut tail latency and cloud costs while improving privacy for sensitive prompts.

An edge+cloud split becomes a viable architecture: small local models handle the fast paths, and server models handle complex requests as a fallback.
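One way such a split could look in code is a small router that tries the local model first and falls back to the server. This is a minimal sketch under stated assumptions: the `Router` class, the `max_local_chars` cutoff, and both stub models are hypothetical names invented for illustration, and a real system would likely route on task type or model confidence rather than prompt length.

```python
from dataclasses import dataclass
from typing import Callable, Optional, Tuple

# Hypothetical interfaces: the local model may decline by returning None.
LocalModel = Callable[[str], Optional[str]]
ServerModel = Callable[[str], str]


@dataclass
class Router:
    local: LocalModel
    server: ServerModel
    max_local_chars: int = 500  # hypothetical cutoff for the fast path

    def answer(self, prompt: str) -> Tuple[str, str]:
        """Return (source, reply), trying the local model first."""
        if len(prompt) <= self.max_local_chars:
            try:
                reply = self.local(prompt)
            except Exception:
                reply = None  # treat any local failure as a reason to fall back
            if reply is not None:
                return "local", reply
        return "server", self.server(prompt)


def tiny_local(prompt: str) -> Optional[str]:
    # Stand-in for an on-device model: only handles short greetings.
    return "Hi!" if prompt.lower().startswith("hello") else None


def big_server(prompt: str) -> str:
    # Stand-in for a remote call to a larger model.
    return f"[server] answer to: {prompt}"


router = Router(local=tiny_local, server=big_server)
print(router.answer("hello there"))
print(router.answer("Summarize this contract"))
```

Returning the source alongside the reply makes it easy to log how often the fast path is actually taken.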

- Benchmark token throughput, latency, and battery/thermal behavior across 4-bit vs 8-bit quantization on target devices.

- Validate functional parity and fallback logic between on-device and server models, including prompt compatibility and safety filters.
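The throughput and latency part of the benchmarking step could be sketched as a small timing harness like the one below. It assumes a hypothetical `generate(prompt)` callable that yields tokens one at a time; `fake_generate` is a stand-in so the sketch runs anywhere, and it would be swapped for the actual on-device runtime. Battery and thermal readings need platform-specific tooling and are not covered here.

```python
import statistics
import time
from typing import Callable, Dict, Iterable, List

# Hypothetical interface: generate(prompt) yields tokens one at a time.
Generate = Callable[[str], Iterable[str]]


def benchmark(generate: Generate, prompts: List[str]) -> Dict[str, float]:
    """Measure time-to-first-token and overall tokens/sec across prompts."""
    first_token_s: List[float] = []
    tokens_per_s: List[float] = []
    for prompt in prompts:
        start = time.perf_counter()
        first = None
        count = 0
        for _ in generate(prompt):
            if first is None:
                first = time.perf_counter()
            count += 1
        end = time.perf_counter()
        if first is None:
            continue  # no tokens produced; skip this prompt
        first_token_s.append(first - start)
        tokens_per_s.append(count / max(end - start, 1e-9))
    if not tokens_per_s:
        raise ValueError("no prompt produced any tokens")
    return {
        "runs": float(len(tokens_per_s)),
        "median_ttft_s": statistics.median(first_token_s),
        "worst_ttft_s": max(first_token_s),
        "median_tokens_per_s": statistics.median(tokens_per_s),
    }


def fake_generate(prompt: str) -> Iterable[str]:
    # Stand-in model: emits one "token" per word with a small delay.
    for word in prompt.split():
        time.sleep(0.001)
        yield word


results = benchmark(fake_generate, ["a b c d", "e f g"])
print(results)
```

Running the same prompt set against a 4-bit and an 8-bit build of the model, on each target device, gives directly comparable numbers for the two quantization levels.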