IBM SHIPS OPEN 32K-CONTEXT MULTILINGUAL EMBEDDINGS THAT PUNCH ABOVE THEIR SIZE

IBM released Granite Embedding Multilingual R2, open multilingual embedding models with 32K context that improve retrieval quality at small model sizes.

Two Apache 2.0 models are available: a 97M-parameter embedder that leads multilingual retrieval among sub-100M models, and a 311M-parameter model that ranks near the top among models under 500M. Both offer Matryoshka dimensions, 32K context, coverage of 200+ languages, and code retrieval across 9 languages. They drop into sentence-transformers, LangChain, and LlamaIndex.

If your corpora mix natural language and code, better cross-lingual and cross-register embeddings may reduce language drift and boost recall (see the case study on code-mixed language drift).

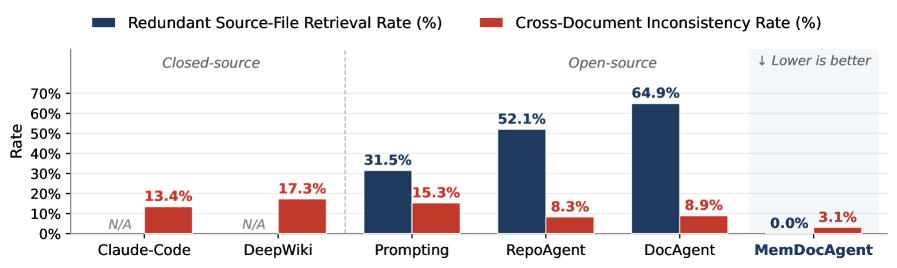

Stronger embeddings also help long-horizon agent and documentation tools that rely on consistent repo-level context, such as MemDocAgent. They also fit the trend that inference design (retrieval, chunking, routing) now drives quality as much as the model itself.

Embeddings gate retrieval quality; open 32K multilingual models let you index longer docs and reduce cross‑language misses without paying for huge models.

Matryoshka dimensions let you truncate each vector to a shorter prefix, giving you a latency/quality dial without re-embedding the corpus. That is useful for multi-tier search and cost control.
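
The Matryoshka dial can be sketched in a few lines of plain Python. This is a minimal illustration, assuming the usual Matryoshka convention that the leading components of a vector form a usable shorter embedding once renormalized. The helper names (`truncate_embedding`, `cosine`) and the toy 8-dimensional vectors are hypothetical stand-ins, not Granite output or any library's API.

```python
import math

def truncate_embedding(vec, dims):
    """Keep the first `dims` components and L2-normalize the result."""
    if not 0 < dims <= len(vec):
        raise ValueError("dims must be between 1 and len(vec)")
    head = vec[:dims]
    norm = math.sqrt(sum(x * x for x in head))
    if norm == 0.0:
        return list(head)  # nothing to normalize
    return [x / norm for x in head]

def cosine(a, b):
    """Cosine similarity of two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    na = math.sqrt(sum(x * x for x in a))
    nb = math.sqrt(sum(y * y for y in b))
    return dot / (na * nb) if na and nb else 0.0

# Toy vectors standing in for real model output (illustrative values only).
doc = [0.62, -0.41, 0.33, 0.20, -0.05, 0.04, 0.02, -0.01]
query = [0.58, -0.45, 0.30, 0.25, 0.03, -0.02, 0.01, 0.02]

# Compare similarity at full width and at two shorter prefixes.
for dims in (8, 4, 2):
    score = cosine(truncate_embedding(query, dims), truncate_embedding(doc, dims))
    print(f"dims={dims} cosine={score:.4f}")
```

In practice the stored full-width vectors stay as the source of truth, and a shorter tier is derived from them by truncation. Whether your vector store needs a rebuilt index at the new width depends on the store.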

A/B your current embedder vs Granite R2 (97M and 311M) on multilingual and code‑mixed queries; track NDCG/MRR/Recall@k and latency/cost.
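
One way to score such an A/B run is sketched below, assuming binary relevance judgments and ranked lists without duplicate ids. The function names and the tiny `qrels`, `baseline`, and `candidate` dictionaries are illustrative placeholders. Real runs would come from your own index and labeled queries, and latency and cost would be measured separately.

```python
import math

def recall_at_k(ranked, relevant, k):
    """Fraction of relevant ids that appear in the top k results."""
    if not relevant:
        return 0.0
    hits = sum(1 for doc_id in ranked[:k] if doc_id in relevant)
    return hits / len(relevant)

def reciprocal_rank(ranked, relevant):
    """1 / rank of the first relevant result, or 0.0 if none is retrieved."""
    for rank, doc_id in enumerate(ranked, start=1):
        if doc_id in relevant:
            return 1.0 / rank
    return 0.0

def ndcg_at_k(ranked, relevant, k):
    """Binary-relevance NDCG@k."""
    dcg = sum(1.0 / math.log2(rank + 1)
              for rank, doc_id in enumerate(ranked[:k], start=1)
              if doc_id in relevant)
    ideal_hits = min(len(relevant), k)
    idcg = sum(1.0 / math.log2(rank + 1) for rank in range(1, ideal_hits + 1))
    return dcg / idcg if idcg else 0.0

def evaluate(runs, qrels, k=10):
    """Average Recall@k, MRR, and NDCG@k over every query in `qrels`."""
    totals = {"recall": 0.0, "mrr": 0.0, "ndcg": 0.0}
    if not qrels:
        return totals
    for qid, relevant in qrels.items():
        ranked = runs.get(qid, [])  # a missing query counts as an empty result list
        totals["recall"] += recall_at_k(ranked, relevant, k)
        totals["mrr"] += reciprocal_rank(ranked, relevant)
        totals["ndcg"] += ndcg_at_k(ranked, relevant, k)
    return {name: value / len(qrels) for name, value in totals.items()}

# Hypothetical judgments and ranked results for two queries.
qrels = {"q1": {"d1", "d3"}, "q2": {"d7"}}
baseline = {"q1": ["d2", "d1", "d4"], "q2": ["d9", "d8", "d7"]}
candidate = {"q1": ["d1", "d3", "d2"], "q2": ["d7", "d9", "d8"]}

for name, runs in (("baseline", baseline), ("candidate", candidate)):
    print(name, evaluate(runs, qrels, k=3))
```

Running the same query set through both embedders and comparing the averaged metrics keeps the comparison apples to apples. Per-language breakdowns can be added by grouping query ids before averaging.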

Rechunk longer documents to exploit 32K context during embedding; measure duplicate hit rates and context window waste in RAG responses.
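
A rechunking experiment along these lines might start from the sketch below. It makes several assumptions: tokens are already available as a list (a real pipeline would use the model's own tokenizer), the 32,000-token budget is an illustrative value chosen to leave headroom under the 32K limit, and "duplicate hit rate" is defined here as the share of retrieved chunks whose source document already appeared earlier in the result list. Both helper names are hypothetical.

```python
def chunk_tokens(tokens, max_tokens, overlap=0):
    """Split a token list into windows of at most `max_tokens` that share `overlap` tokens."""
    if max_tokens <= 0:
        raise ValueError("max_tokens must be positive")
    if not 0 <= overlap < max_tokens:
        raise ValueError("overlap must be in [0, max_tokens)")
    step = max_tokens - overlap
    chunks = []
    for start in range(0, len(tokens), step):
        chunks.append(tokens[start:start + max_tokens])
        if start + max_tokens >= len(tokens):
            break  # the last window already reaches the end of the document
    return chunks

def duplicate_hit_rate(hit_doc_ids):
    """Share of hits whose source document already appeared earlier in the list."""
    if not hit_doc_ids:
        return 0.0
    seen = set()
    duplicates = 0
    for doc_id in hit_doc_ids:
        if doc_id in seen:
            duplicates += 1
        seen.add(doc_id)
    return duplicates / len(hit_doc_ids)

# A stand-in 40,000-token document: integers play the role of token ids.
tokens = list(range(40_000))
small = chunk_tokens(tokens, max_tokens=512, overlap=64)
large = chunk_tokens(tokens, max_tokens=32_000, overlap=64)
print(len(small), "small chunks vs", len(large), "large chunks")

# Source documents of the top hits for one query (hypothetical ids).
print(duplicate_hit_rate(["guide", "guide", "faq", "guide"]))
```

Fewer, larger chunks tend to lower the duplicate hit rate but put more unrelated text into each retrieved passage, so both numbers are worth tracking together.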

Legacy codebase integration strategies...

- 01. Plan a side-by-side reindex in a shadow index; Matryoshka enables reducing vector dims later without a full reindex.

- 02. Audit language drift on mixed Chinese/English code queries before/after swap; watch for query translation artifacts and ranker interactions.

Fresh architecture paradigms...

- 01. Pick 97M for low-latency APIs; 311M for higher recall on noisy multilingual corpora. Set vector dims to match Matryoshka targets.

- 02. Design retrieval to separate code vs prose namespaces; use reranking only if recall gaps remain.
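
The namespace split can be illustrated with a toy in-memory store. This is only a sketch under stated assumptions: `NamespacedIndex` is a hypothetical class, not a real vector database client, the 3-dimensional vectors are made up, and a production system would use the store's own namespace or collection feature instead.

```python
import math
from collections import defaultdict

def cosine(a, b):
    """Cosine similarity of two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    na = math.sqrt(sum(x * x for x in a))
    nb = math.sqrt(sum(y * y for y in b))
    return dot / (na * nb) if na and nb else 0.0

class NamespacedIndex:
    """Tiny in-memory vector store that keeps each namespace in its own bucket."""

    def __init__(self):
        self._buckets = defaultdict(list)  # namespace -> [(doc_id, vector)]

    def add(self, namespace, doc_id, vector):
        self._buckets[namespace].append((doc_id, vector))

    def search(self, namespace, query_vec, k=5):
        """Return the top-k (doc_id, score) pairs from one namespace only."""
        scored = [(doc_id, cosine(query_vec, vec))
                  for doc_id, vec in self._buckets.get(namespace, [])]
        scored.sort(key=lambda pair: pair[1], reverse=True)
        return scored[:k]

index = NamespacedIndex()
index.add("code", "utils.py", [0.9, 0.1, 0.0])
index.add("code", "parser.py", [0.2, 0.8, 0.1])
index.add("prose", "README", [0.85, 0.2, 0.1])

# A code query only ever sees code documents, even if a prose vector is close.
print(index.search("code", [1.0, 0.0, 0.0], k=2))
print(index.search("prose", [1.0, 0.0, 0.0], k=2))
```

Keeping the buckets separate means a reranker, if one is added later, only has to compare candidates of the same kind.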
